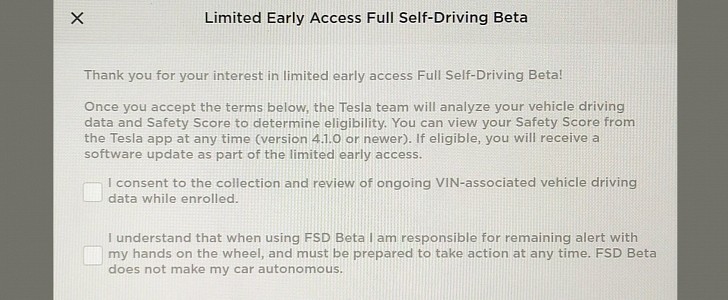

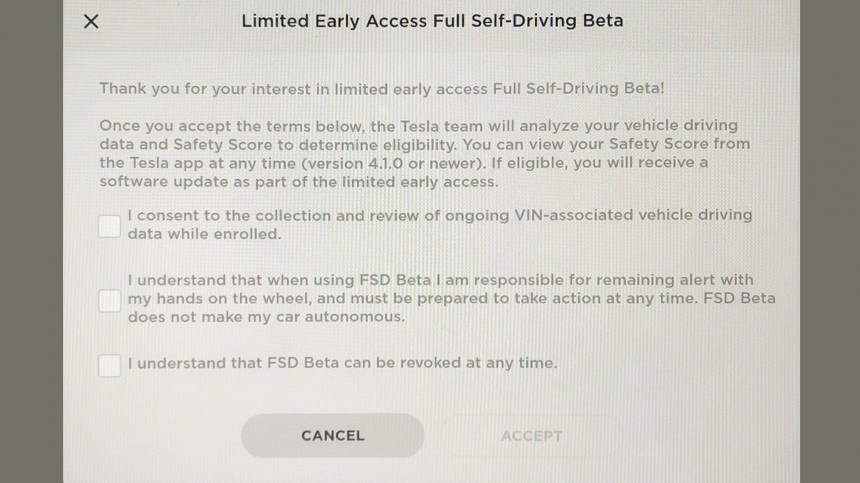

In our previous article on Tesla's Safety Score Beta, we listed the five "safety" factors Tesla chose to measure. As the screenshot below shows, the company carefully worded its disclaimer to say that "FSD Beta does not make my car autonomous." Spelling out the full name would have produced the awkward admission that "Full Self-Driving Beta does not make my car autonomous."

Philip Koopman was quick to correct Tesla on how it named the factors. According to the autonomous vehicle safety expert and associate professor at Carnegie Mellon University, the metrics are actually risk factors, useful for pricing insurance policies. They are far less useful for assessing whether a person drives safely.

Missy Cummings agreed with Koopman that these rules might lead to more dangerous driving than there would be without the Safety Score Beta. According to the director of the Humans and Autonomy Lab (HAL), the possible abuses and misuses of such metrics are well documented, and their effects "will undoubtedly be revealed via accident investigations or lawsuits."

Tweets about how hard it is to achieve a high score are now spreading. One user said other drivers had already cursed at him repeatedly because of the way he was driving. Others claim that using Autopilot lowers their safety score, which is the mother of all ironies: Tesla's own Level 2 ADAS makes it harder for people to get access to another Level 2 ADAS from the company, one that requires even more attention than everyday driving.

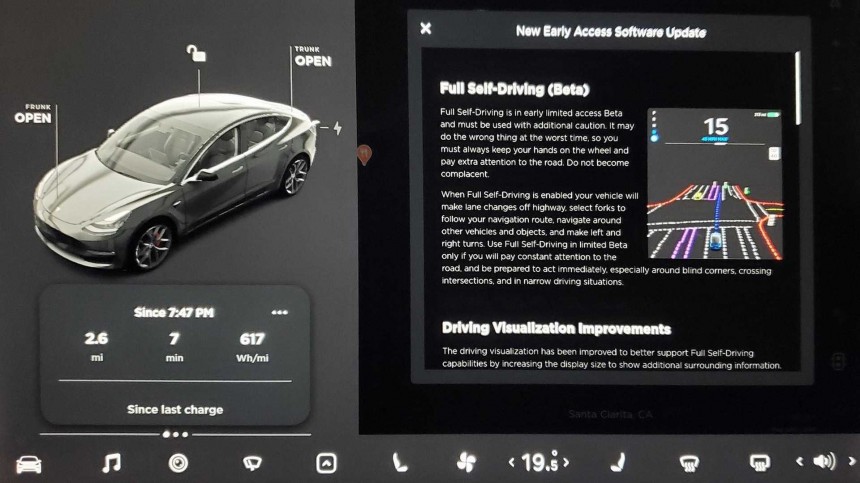

With such implications on the table, it is difficult to understand why people are going to such lengths to get the beta software. When they paid up to $10,000 for FSD, they probably expected to own a car that drives by itself, an "appreciating asset," as Musk put it. If Tesla itself makes people acknowledge that this is not the case when they request FSD Beta, the frenzy around meeting its requirements is hard to comprehend. Worse still, everyone around those vehicles is exposed to them if anything goes wrong.

> So calling this a "safety" score is misleading. This is a risk score. Assuming it is valid, it is based on correlative analysis as is typical for insurance scores.
>
> — Philip Koopman (@PhilKoopman) September 25, 2021

> Correlation is not causation.
>
> Obxkcd: https://t.co/05wWSlX8ve pic.twitter.com/6878ypuc9b

> Did anyone @tesla consult w/ any human factors engineers? Both the creation of & possible abuses/misuses of such a metric are well documented. Both the actual formula & how it might contribute to accidents will undoubtably be revealed via accident investigations or lawsuits
>
> — Missy Cummings (@missy_cummings) September 27, 2021

> Lol pic.twitter.com/jUI28xd0Lq
>
> — AutonoDriver (@AutonoD) September 26, 2021

> Testers should be trained and getting paid to do the work for $TSLA. Instead, they have donated $10k for the privilege of intervening when the car behaves like it's drunk. https://t.co/QnqlvJ367f
>
> — TR (@Tweet_Removed) September 26, 2021